June 17, 2025

May 2025 Newsletter: Research on Misinformation, Geo-Blocking & TFGBV Post Pahalgam Attack and During Indo-Pak Escalations

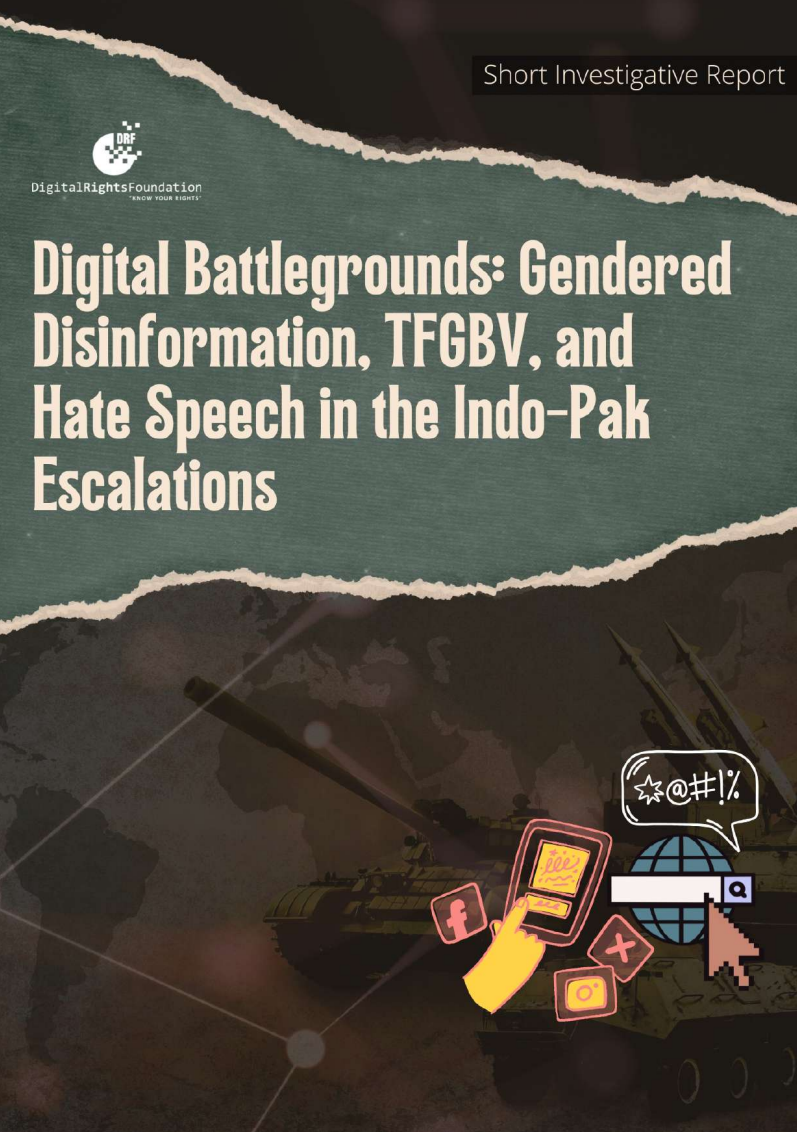

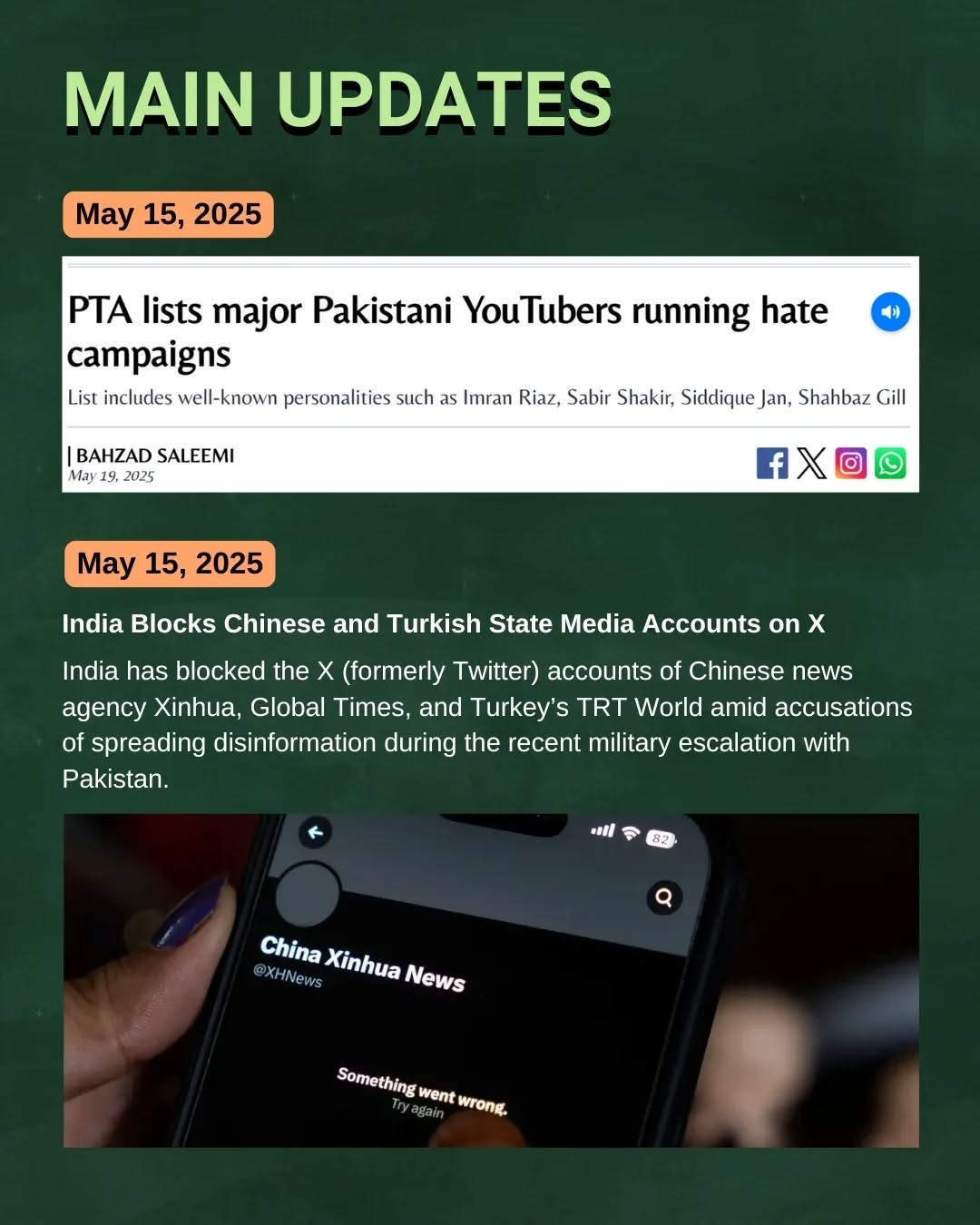

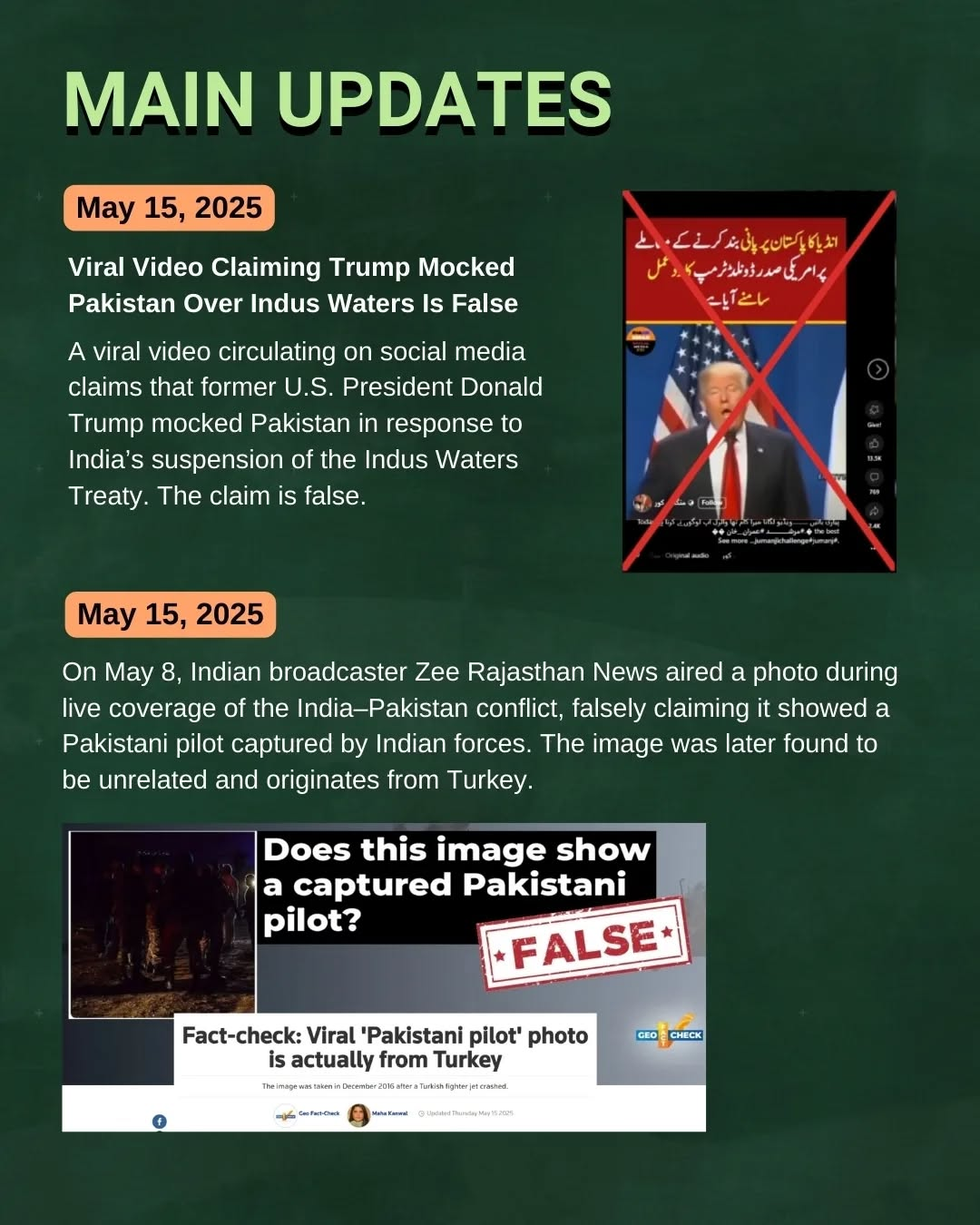

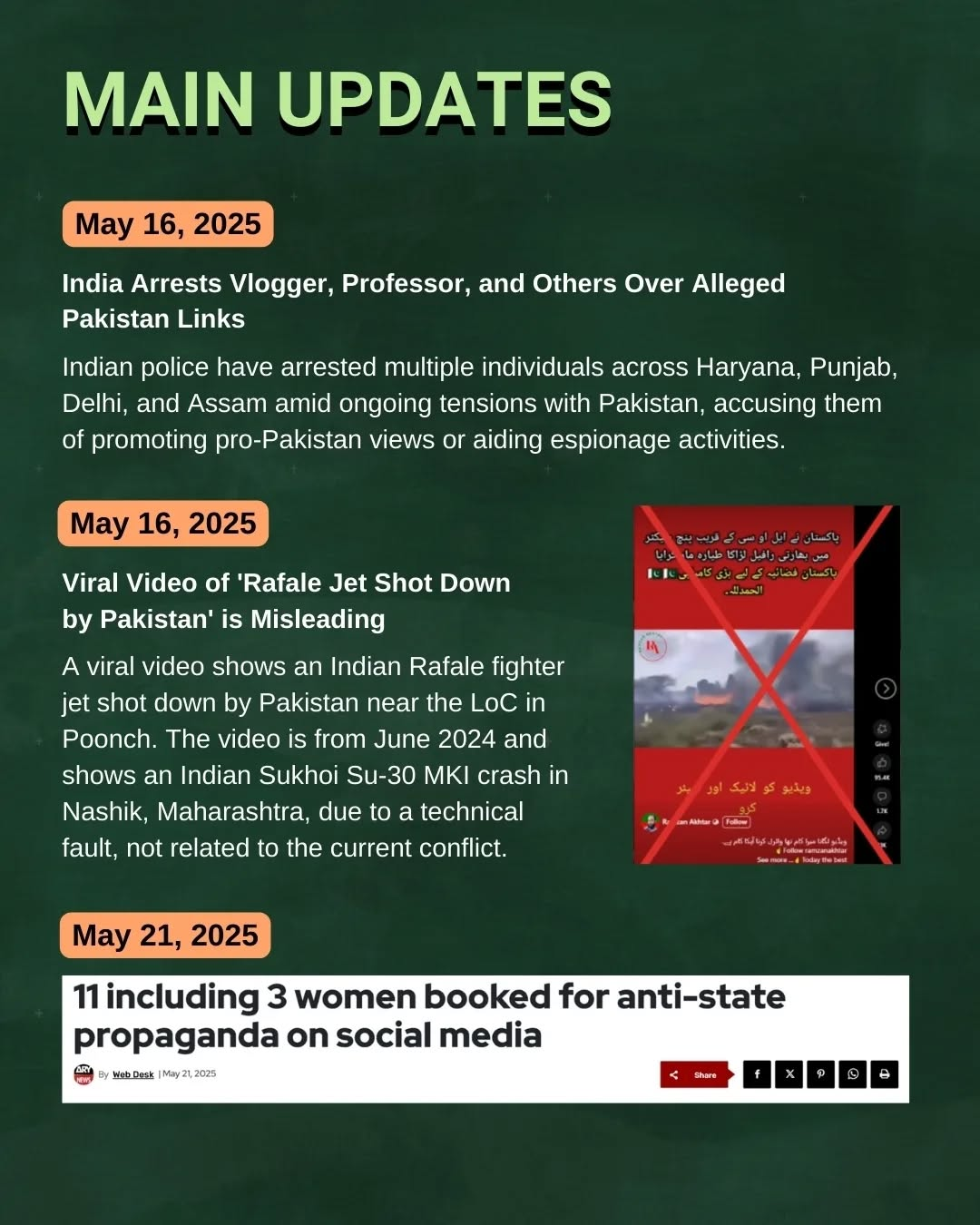

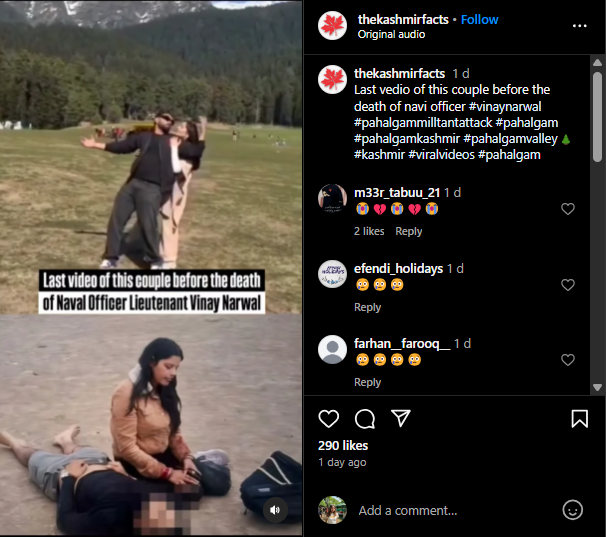

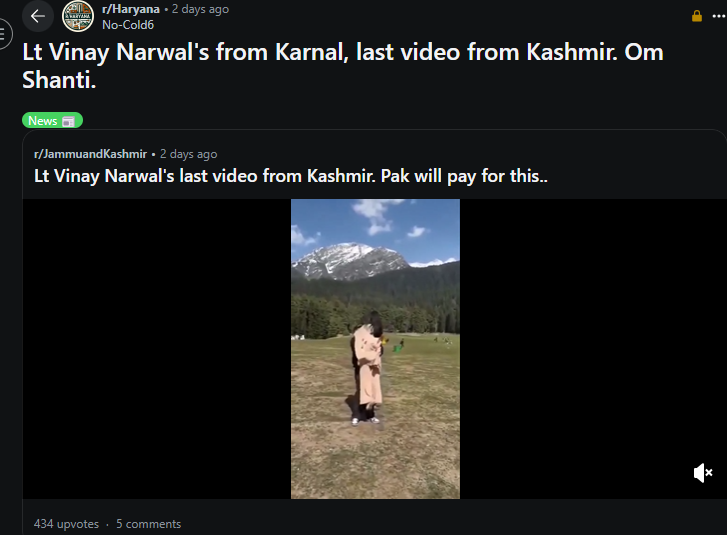

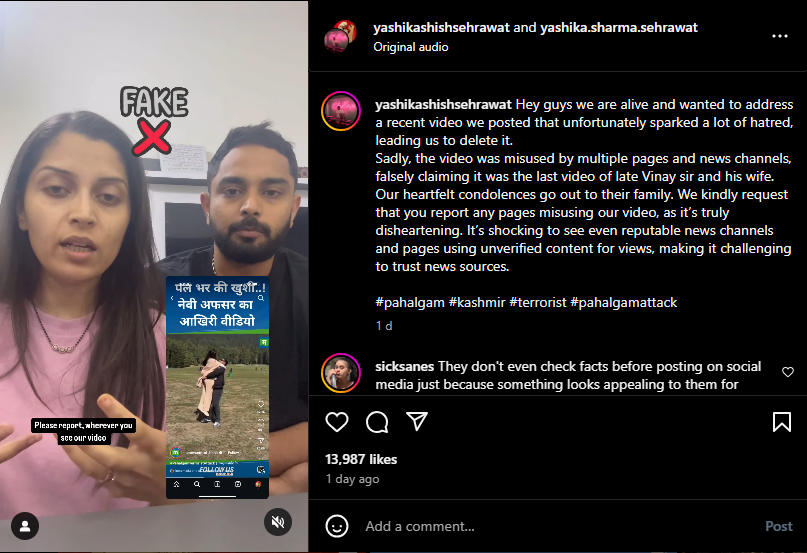

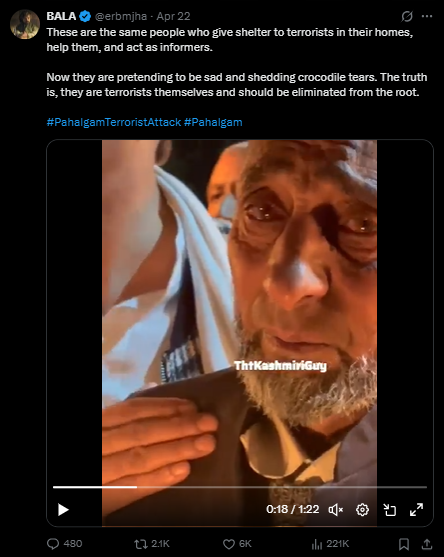

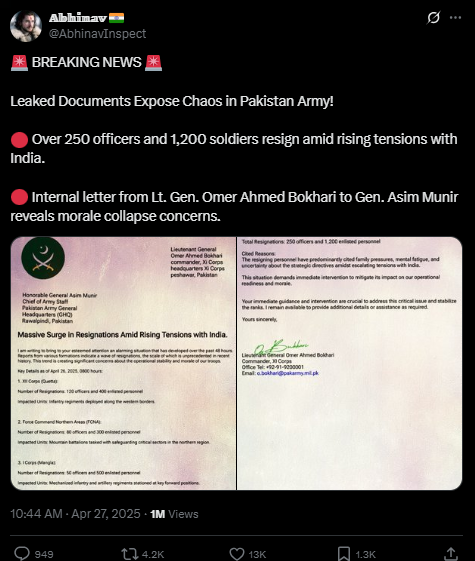

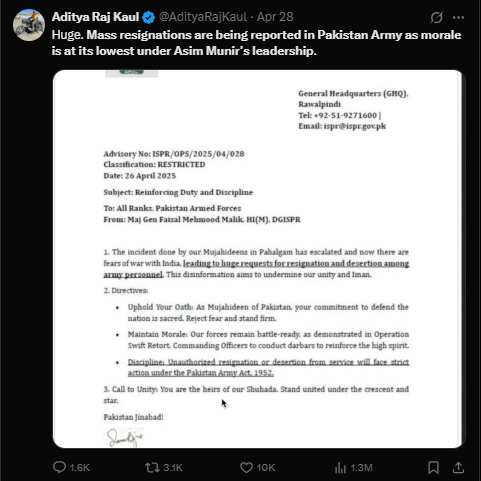

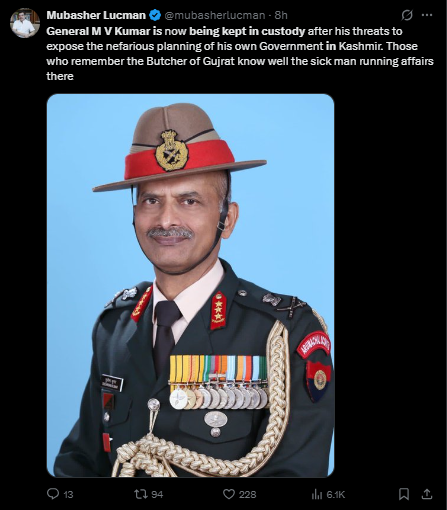

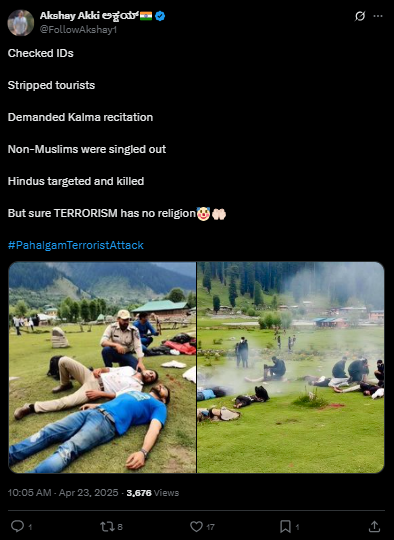

In the wake of the Pahalgam attack, DRF analysed 5 major misinformation campaigns and 4 viral hate speech trends to show how social media helps spread inflammatory content. DRF also collected data during the Indo-Pak escalations that revealed a troubling trend of geo-blocking and censorship on the other side of the border, raising human rights concerns and highlighting inconsistent platform moderation policies. These findings were released as a short investigative report with an online explainer.

Finally, DRF analysed 295 unique posts pertaining to the escalations across 5 platforms and found that 25% of these were directly connected to gendered disinformation, TFGBV, and gendered hate speech. The analysis identified 5 key content categories of platform-enabled misogyny on both sides of the border. These findings were released as a report with an online explainer.

Regional Engagements & Initiatives

Nighat Dad at Online Panel Discussion by CSOH & St. The London Story

DRF ED Nighat Dad spoke on a panel discussion titled “The Digital Warfare Between India and Pakistan” on 22 May. The panel highlighted the rise of online hate, misinformation, and disinformation after the Pahalgam attack and the outbreak of hostilities between India and Pakistan.

Our Latest Research & Advocacy

Second Issue of Digital 50.50 Launched

This issue spotlights the alarming nexus between tech platforms and bad actors in suppressing free speech online, which threatens journalist safety and human rights across digital spaces. In light of the recent Indo-Pak escalations, which were marked by cyber warfare and disinformation campaigns, this issue highlights how media, civil society, and digital rights advocates must reclaim and protect online spaces from such threats.

DRF Releases Annual Report 2024

In 2024, DRF continued its mission to advance digital rights and online freedoms across South Asia and the Global Majority. From addressing pressing digital rights challenges to amplifying critical conversations on AI governance and data privacy, our work has remained at the forefront of change. Our 2024 annual report captures a year of progress, resilience, and impact in our ongoing mission to create a safer, more inclusive digital landscape.

World Press Freedom Day

For World Press Freedom Day, DRF drew attention to Pakistan’s latest ranking in the World Press Freedom Index 2025: 158 out of 180 countries, a drop of six places from its 2024 ranking. Read our analysis on the shrinking space for press freedom here.

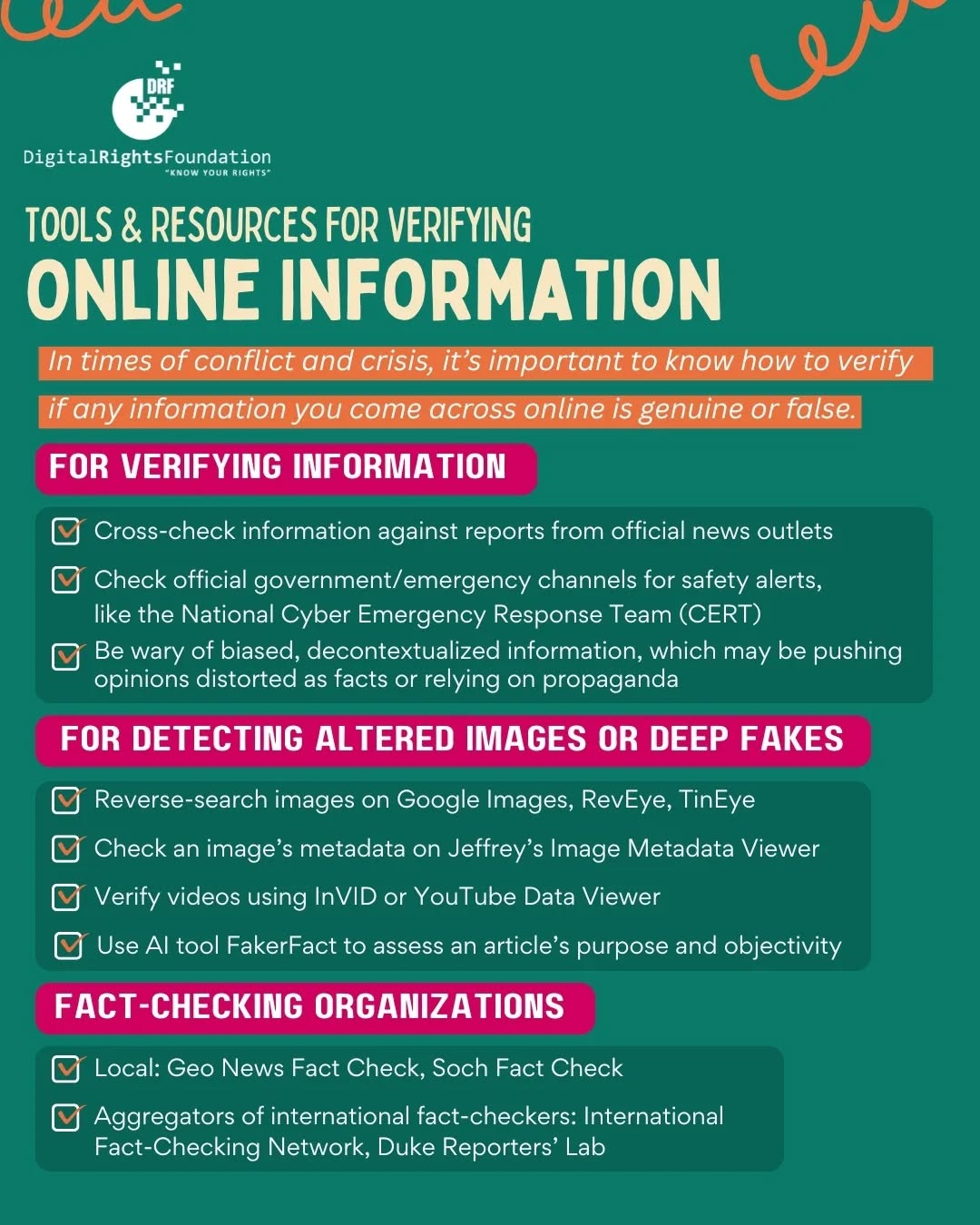

Resources and Toolkits During Indo-Pak Escalations

Given the rampant misinformation, digital security threats and psychological overwhelm citizens experienced during the Indo-Pak escalations, DRF issued several resource lists and toolkits to support citizens (as well as journalists) during this precarious period.

The State of Free Speech in Pakistan

According to this year’s Future of Free Speech Index, a report by The Future of Free Speech measuring free speech support across 33 countries, Pakistan ranked among the lowest in terms of support for free speech in 2024. The findings also reveal higher support for certain types of free speech compared to 2021.

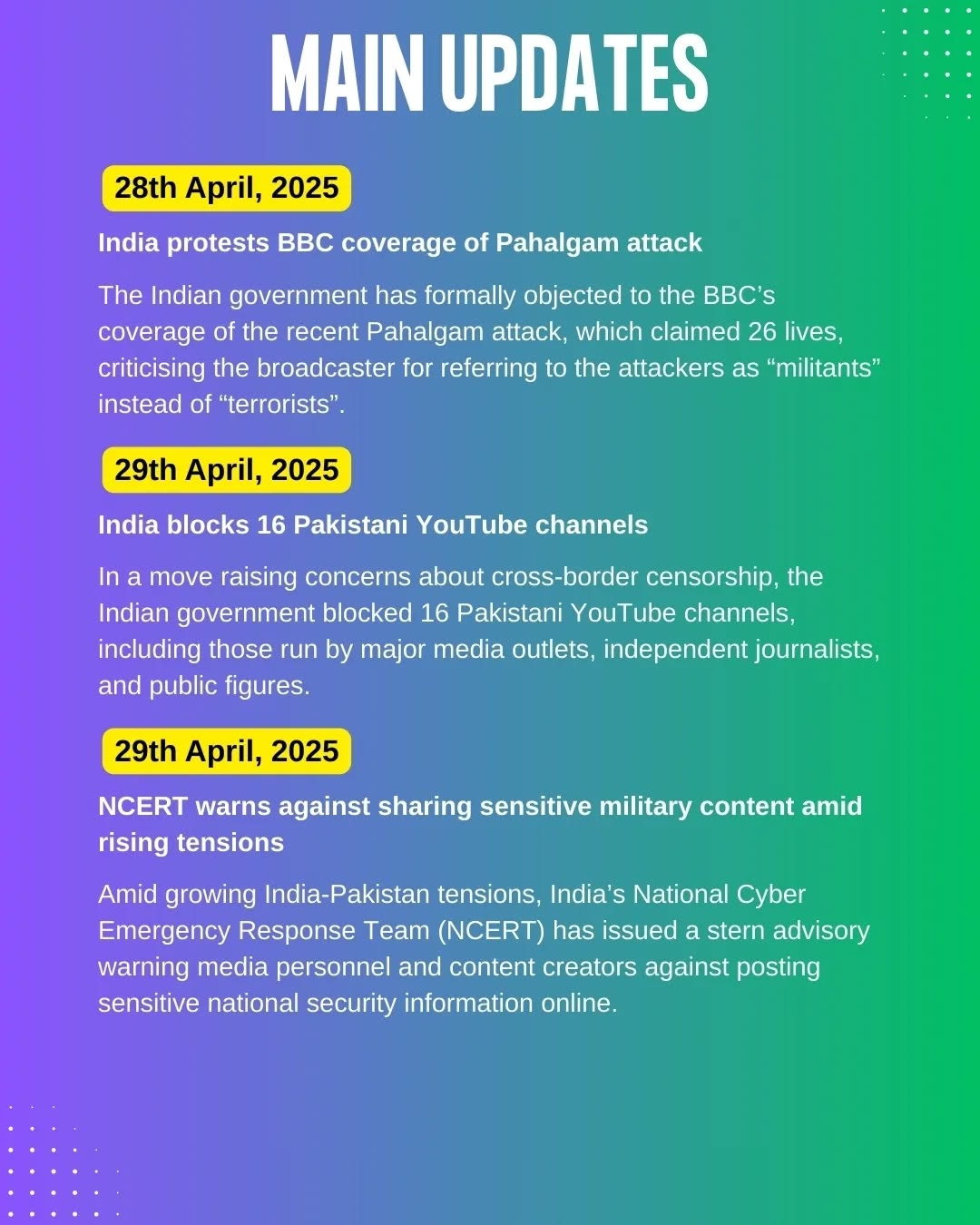

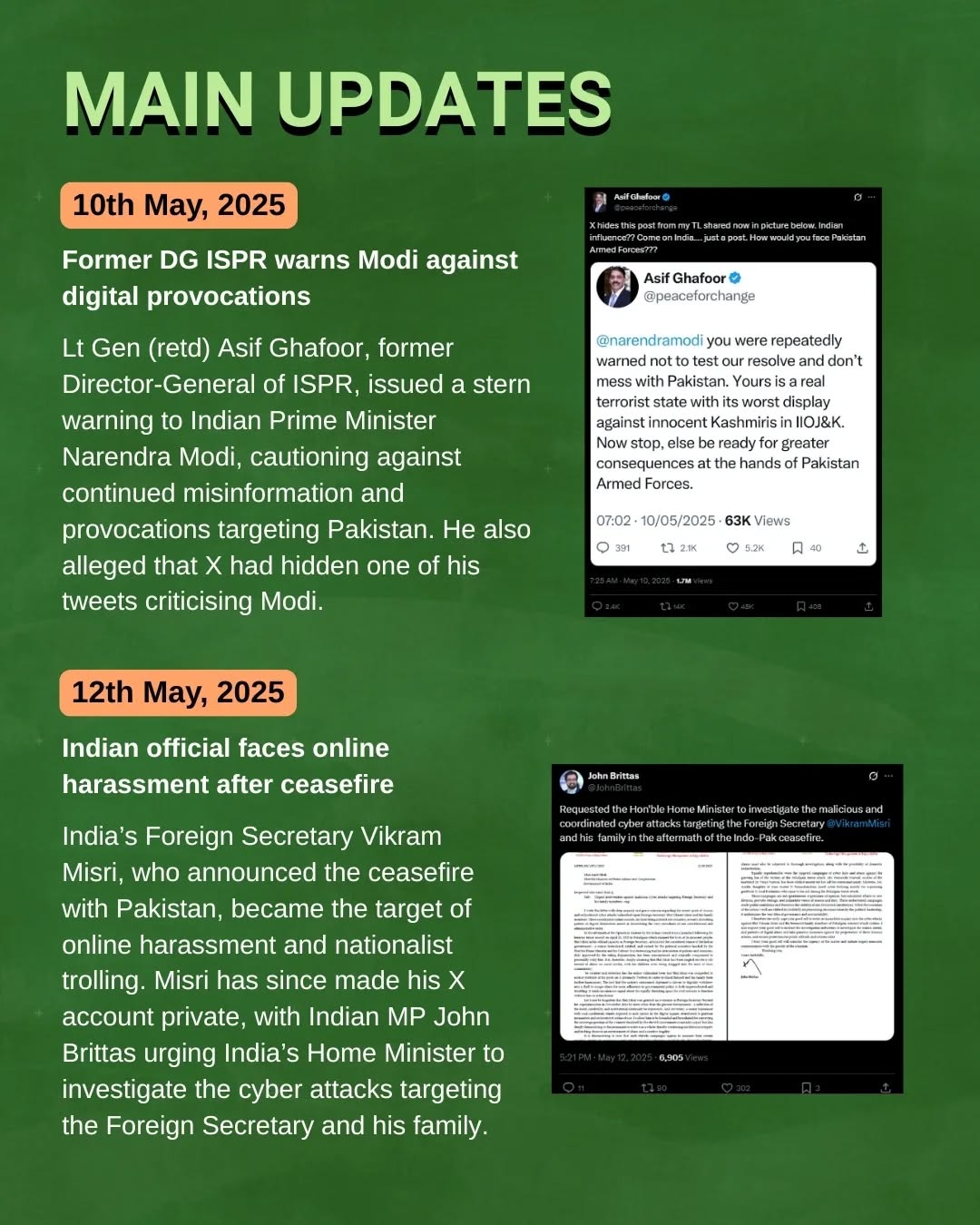

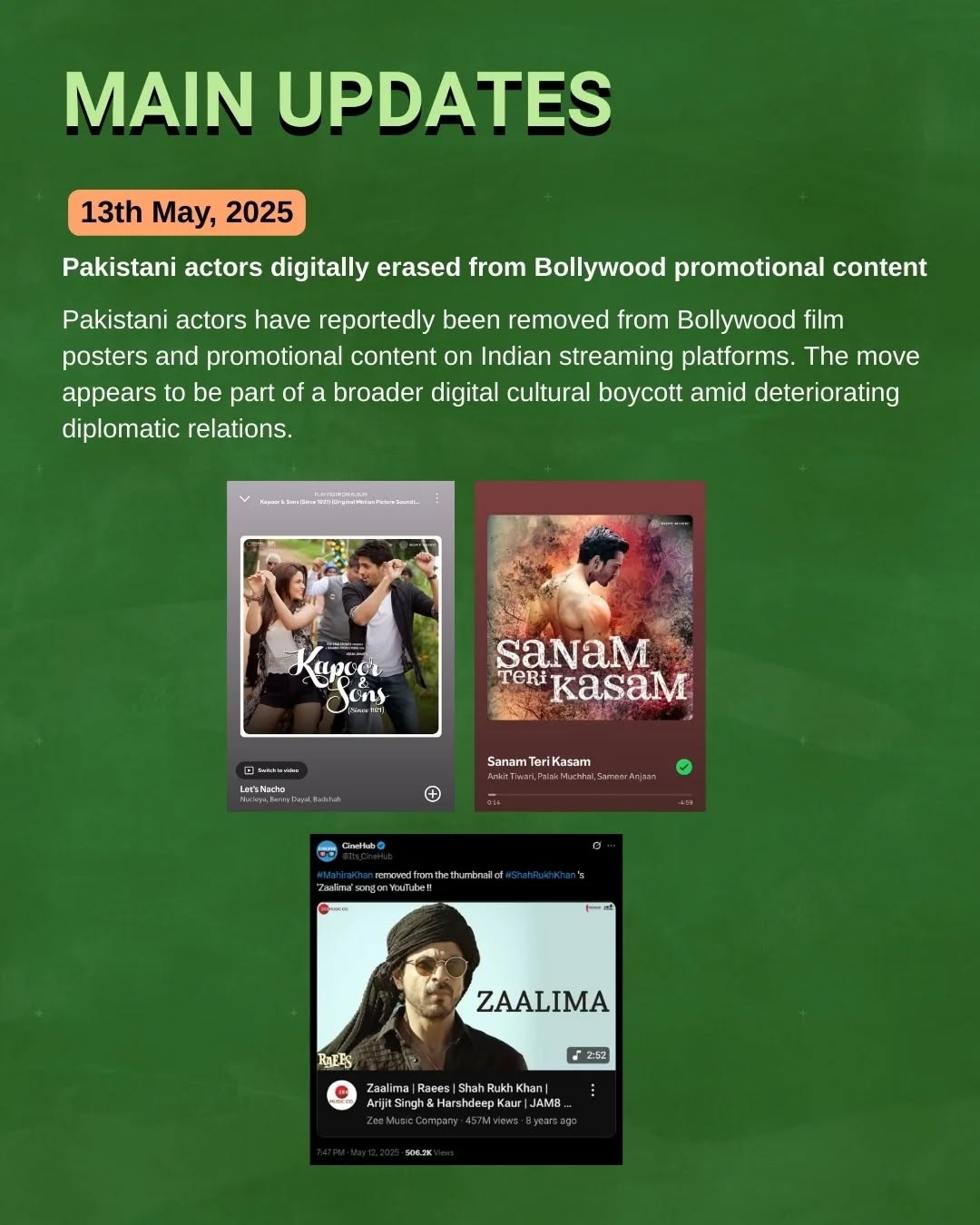

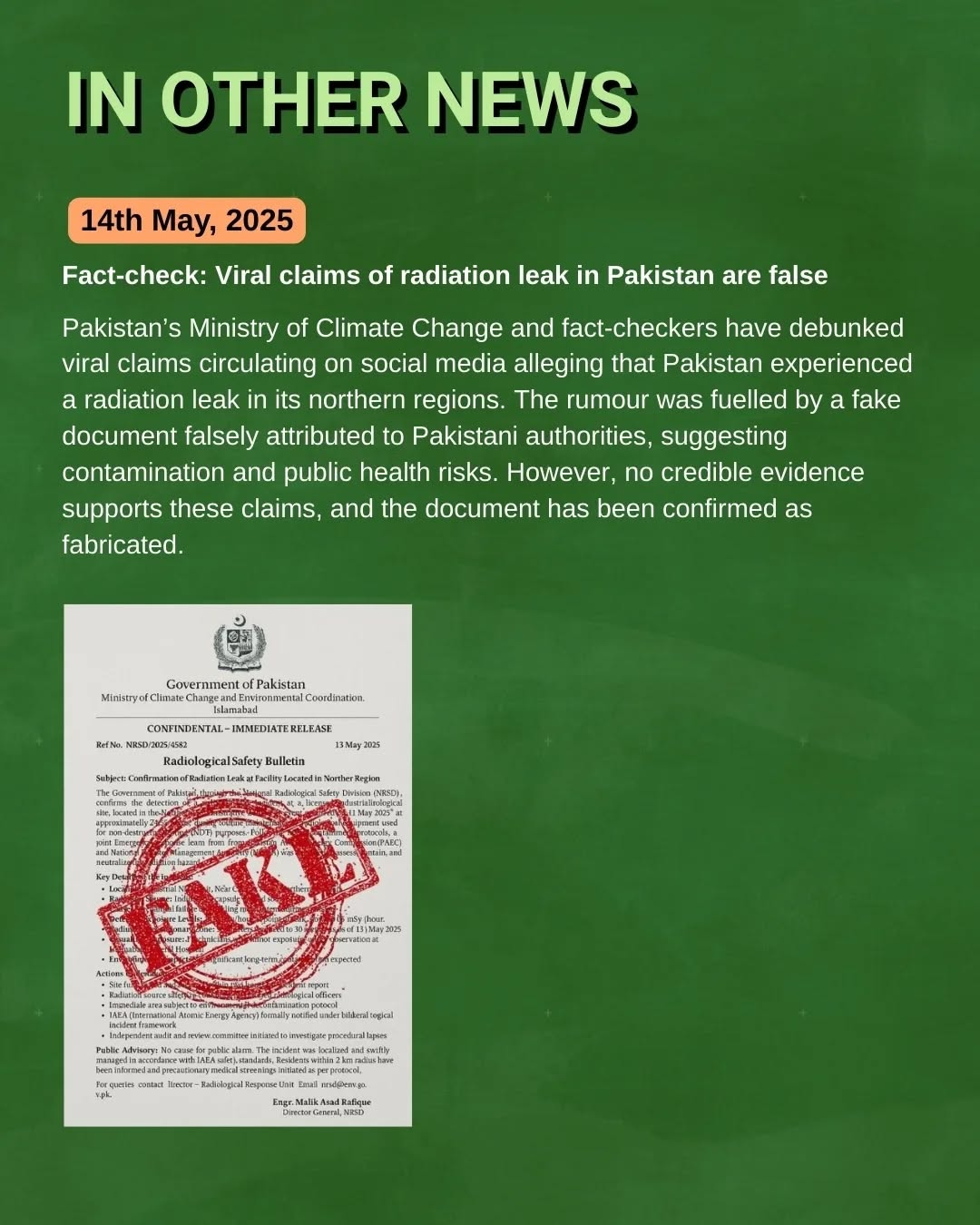

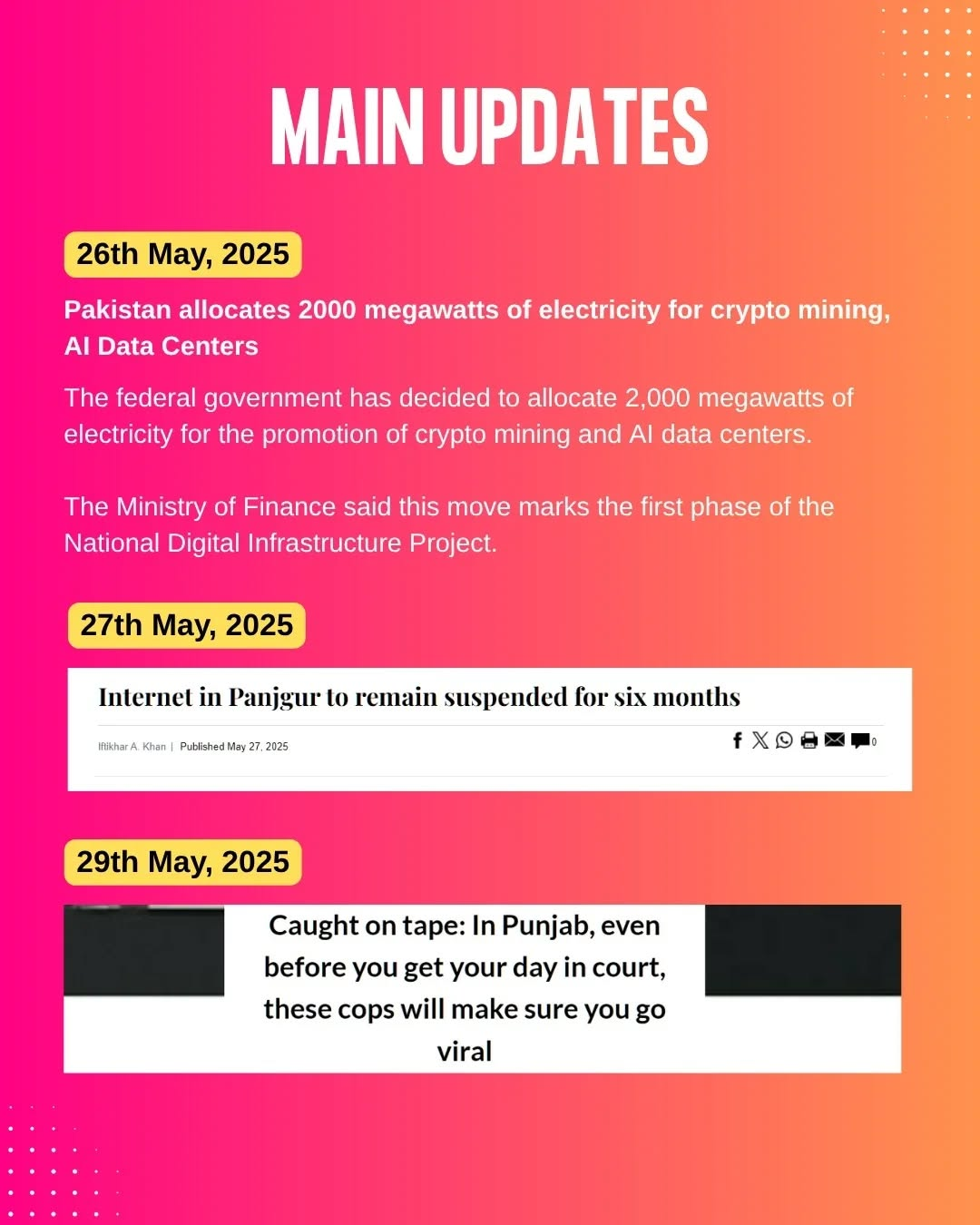

Digital Rights Tracker Updates

DRF continued to provide weekly updates from its Digital Rights Tracker. However, given the rapid pace of escalations between India and Pakistan in May, a limited-edition Indo-Pak Escalations Tracker was launched to provide frequent updates on cybersecurity breaches, arbitrary online restrictions, and misinformation/disinformation.

Press Coverage

DRF Statement on Verifying Information

On 7 May, at the onset of escalations between India and Pakistan, misinformation proliferated online amidst significant panic. DRF quickly issued a statement cautioning citizens against spreading information without verification and urging everyone to share responsibly.

DRF Advice on Spotting Fake News for Geo News

DRF shared expert advice and tips to educate citizens on how to identify fake news and misinformation during the Indo-Pak escalations for Geo News. Read more here.

Nighat Dad Highlights Misinformation War to ABC News Australia

Nighat Dad shared insights with ABC News Australia about AI-generated war disinformation during the Indo-Pak escalations. DRF Research Associate Sara Imran also weighed in, highlighting an example of a viral video that falsely claimed to show a couple’s final moments before the Pahalgam attack. Read the article here.

Seerat Khan on The DigiPod

DRF Senior Researcher Seerat Khan appeared on The DigiPod with Farieha Aziz to discuss increased misinformation and disinformation post Pahalgam and during the Indo-Pak escalations. She discussed DRF’s latest research in this regard as well. Listen to the episode here.

Anam Baloch on Rights Watch

DRF Programs Lead Anam Baloch appeared on VoicePK’s Rights Watch to discuss DRF’s findings on misinformation and disinformation post Pahalgam and during the Indo-Pak escalations, shedding light on the need for local crisis monitoring mechanisms, CSO fact-checking, and cross-border verification initiatives. Watch the segment here.

Nighat Dad Examines Strategic Bitcoin Reserve on Geo

Nighat Dad weighed in on the Strategic Bitcoin Reserve on Geo Pakistan, emphasizing transparent and timely regulation, as well as enhanced digital literacy, as key to enabling new forms of employment and a new digital financial frontier. Watch the segment here, and read its coverage here and here.

DRF was also featured in the following press coverage:

Events

Workshop for Marginalized Groups on Online Safety

DRF was invited by Christian Study Centre to speak with 15 participants from marginalized communities on social cohesion in online spaces and digital safety. The Youth Digital Media Training covered dis/misinformation, fact-checking, the ethical use of AI, gendered harm, and the importance of consent, along with keeping accounts protected and browsing the web safely.

Tech Trends

Pakistan’s Crypto Confusion

While the State Bank of Pakistan and Ministry of Finance insist that crypto remains illegal, the government simultaneously unveiled its first Strategic Bitcoin Reserve at a high-profile event in Las Vegas. The contradiction of a national ban alongside pro-crypto rhetoric and mining plans has left investors puzzled. As the State Bank refers crypto cases to law enforcement, Pakistan positions itself as a future digital finance hub, but without a clear legal framework, this trend walks a regulatory tightrope.

Pakistan Taps Starlink to Boost Digital Connectivity

Pakistan is in advanced talks with SpaceX to bring Starlink’s satellite internet to underserved regions. During a high-level visit to SpaceX HQ, officials explored collaboration on expanding broadband via low Earth orbit satellites. With licensing expected to wrap up soon, Starlink services are projected to launch in Pakistan by November 2025. The move could revolutionise digital access in remote areas, marking a major step in bridging Pakistan’s digital divide and supporting its growing freelancing and tech ecosystem.

Tip of the Month: Digital Spring Cleaning: Refresh Your Online Presence

Out with the old: Delete unused accounts and outdated profiles to reduce your digital footprint.

Unsubscribe and breathe: Clear your inbox by unsubscribing from newsletters you no longer read. (But not this one! 🙂)

Tidy up your apps: Remove apps you haven't used in months to free up space and enhance security.

Update for safety: Keep your devices and software updated to protect against vulnerabilities.

Privacy check: Review and adjust your social media privacy settings to control what you share.

Clear browsing data: Delete cookies and cache to improve browser performance and privacy.

Stay vigilant: Regular digital cleanups help maintain your online security and peace of mind.

DRF Resources

Digital Security Helpline

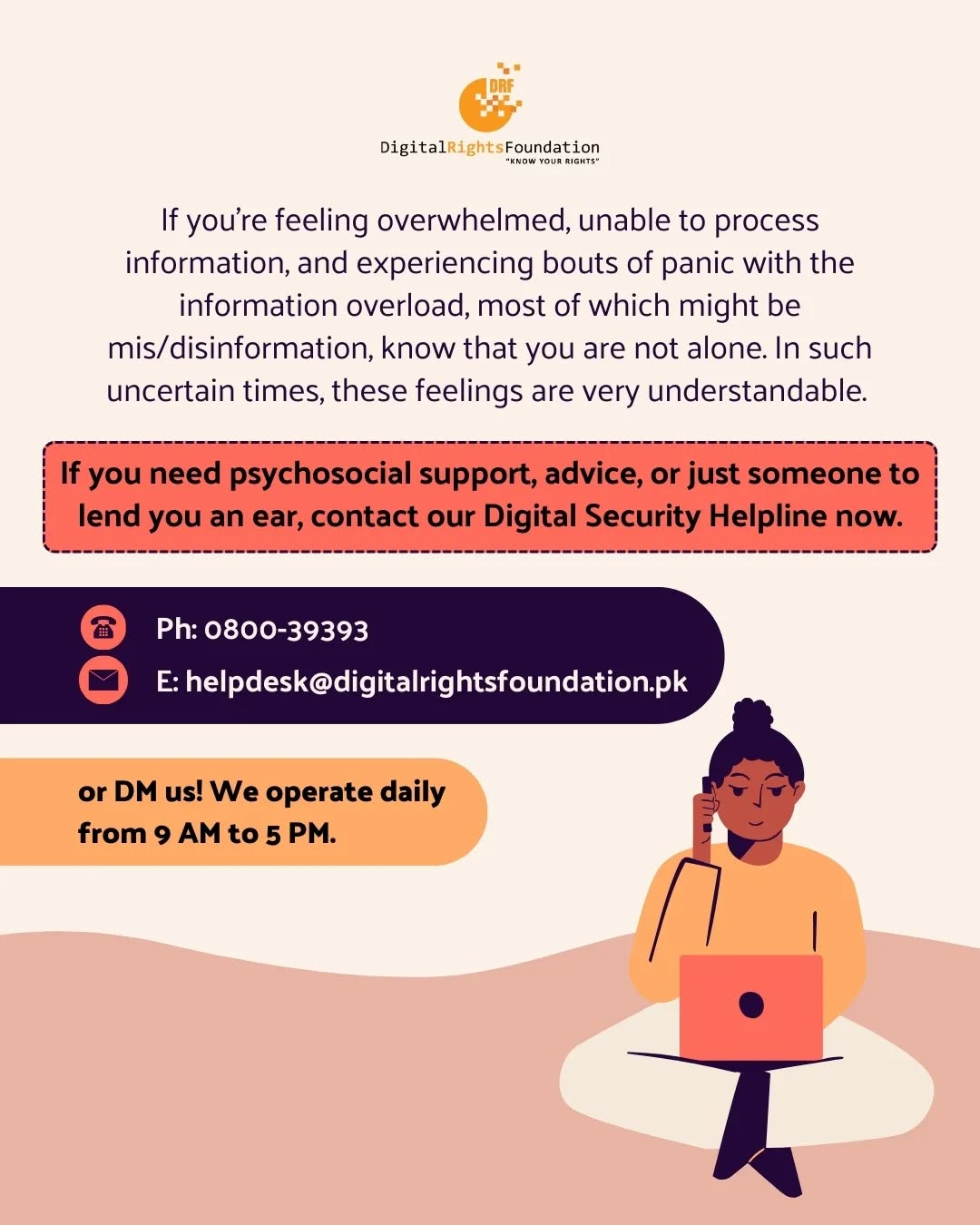

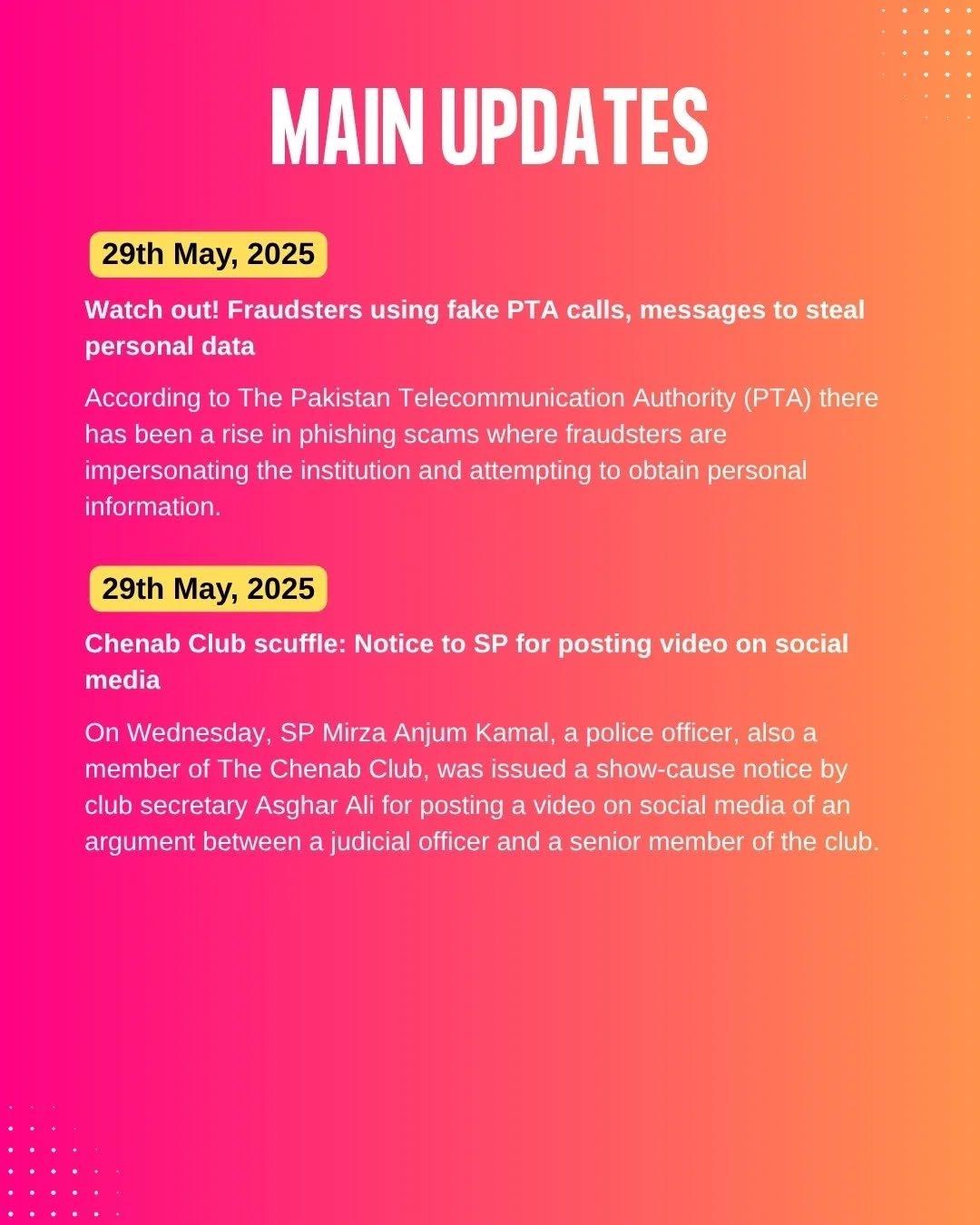

The Digital Security Helpline received 247 complaints in May 2025, of which 208 were related to cyber harassment.

The Helpline issued a resource list of safety tools for navigating digital risks while travelling, such as device searches or data extraction. A public advisory was also issued when the National Cyber Emergency Response Team (CERT) announced a major data breach exposing over 184 million passwords.

If you’re encountering a problem online, you can reach out to our helpline at 0800-39393, email us at helpdesk@digitalrightsfoundation.pk or reach out to us on our social media accounts. We’re available for assistance from 9 AM to 5 PM, Monday to Sunday.

IWF Portal

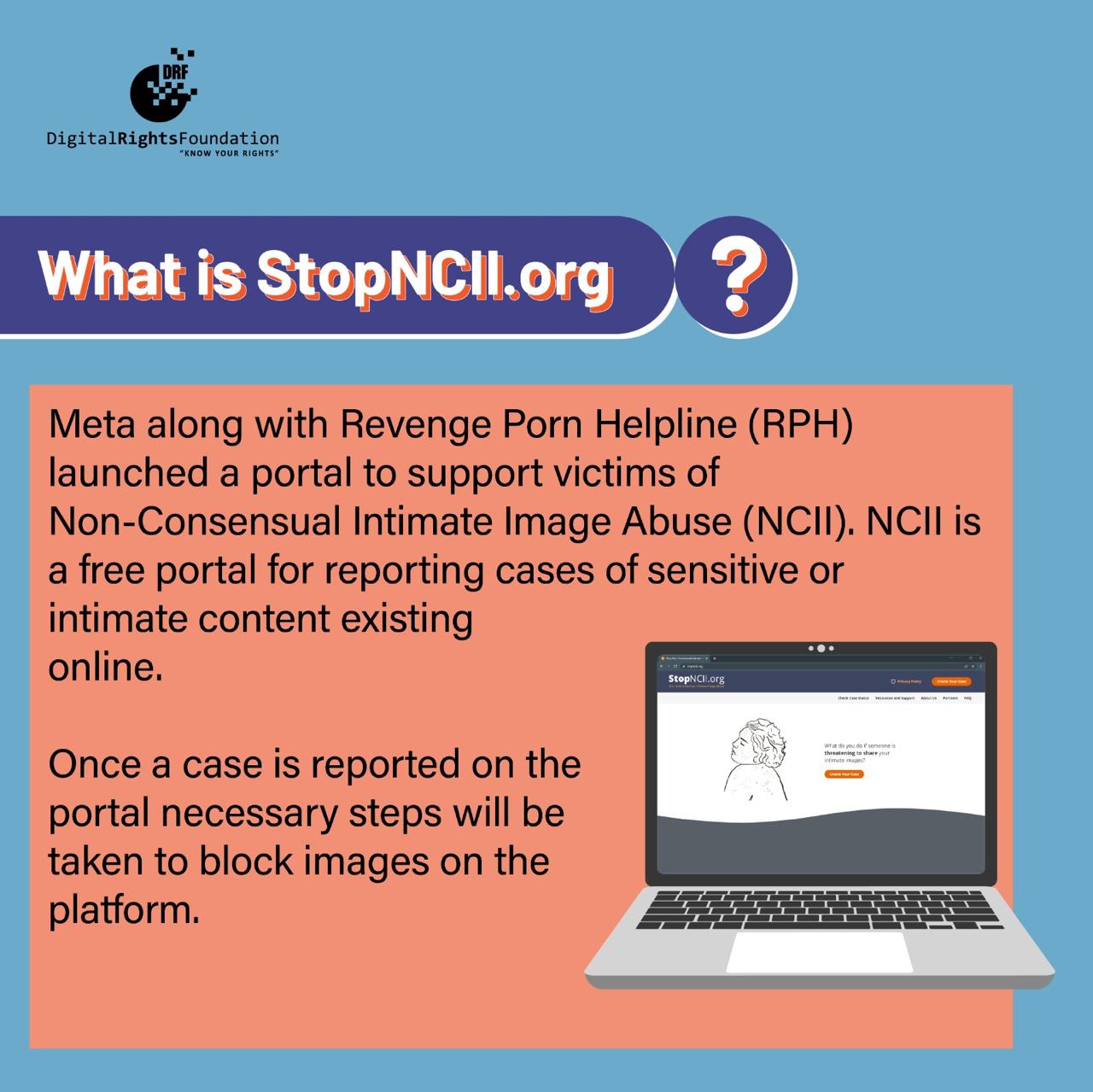

StopNCII.org