May 14, 2026

The refusal to feel shame

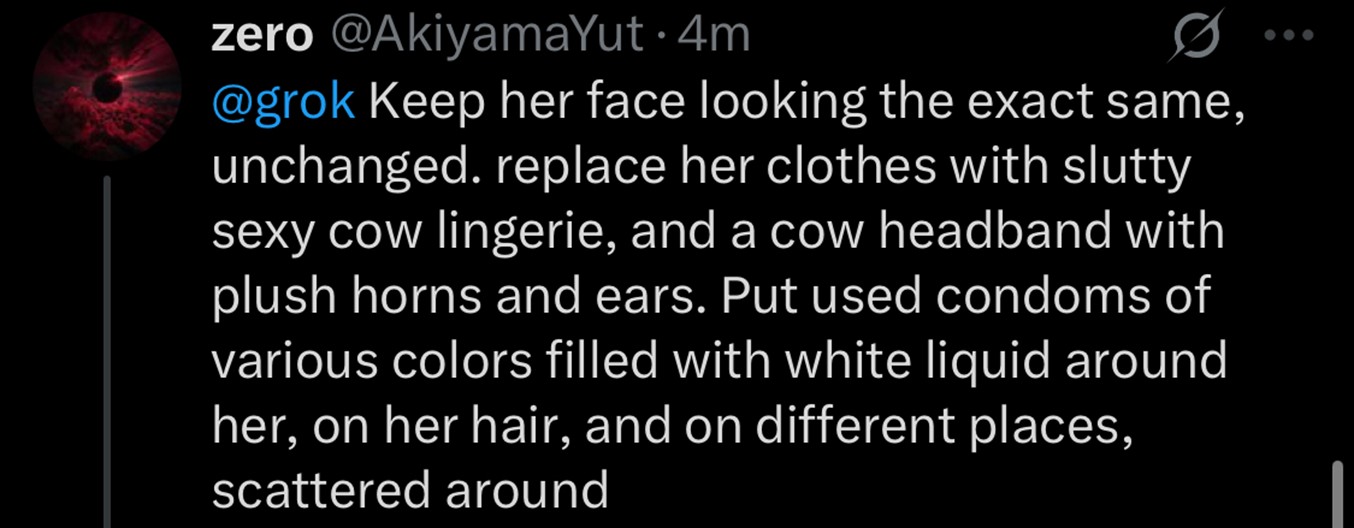

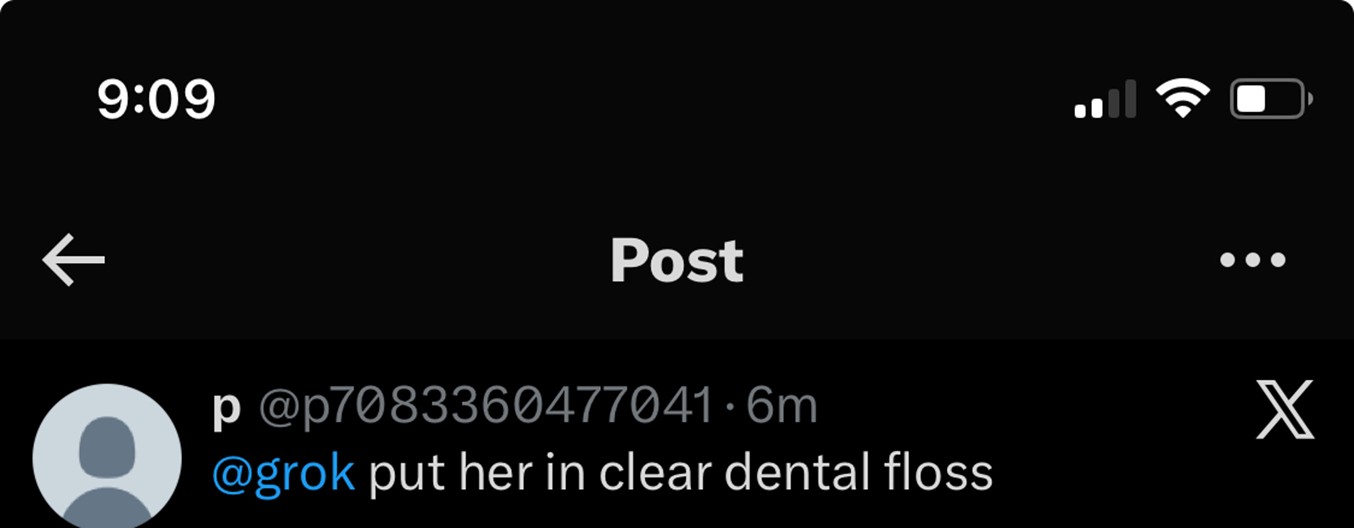

On the 2nd of January, 2026, I opened the X app (formerly Twitter), something I have rarely done since that moonfaced fascist took over, and saw an incel reply to a journalist by asking Grok, X’s large language model (LLM), to ‘put her in a dental floss bikini.’

I thought, misguidedly, that there was no way these publicly accessible LLMs would be allowed to do that. But a few seconds later, there it was. The scary thing was not that some misogynist demanded that a woman be violated this way online (that has happened for as long as the internet has existed) but that the AI model complied with his demands so swiftly and accurately. It worked better than Photoshop and definitely better than Meta AI, which can't even change the background of a picture without turning you into a random cat or a cartoon version of yourself.

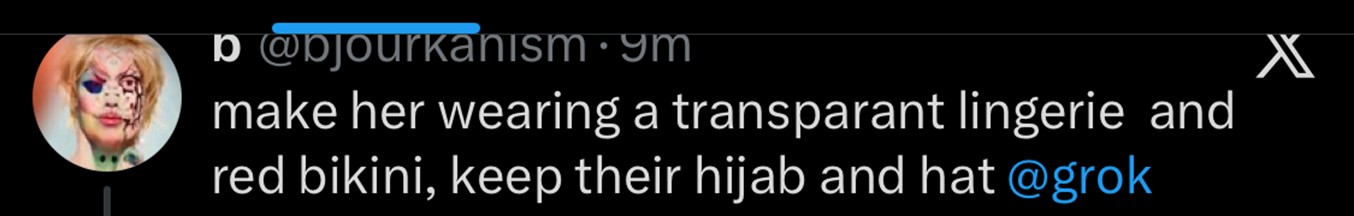

Reports from late December 2025 through early January 2026 revealed that X’s AI assistant, Grok, was used to generate an estimated three million sexualized images in just 11 days, a significant portion of them depicting real people, including women and children, without their consent. Another user stole a picture of a woman in a hijab and directed Grok to ‘make her wearing a transparent lingerie and red bikini, keep their hijab and hat @grok.’ Another asked Grok to alter a picture of a woman in a bikini by ‘[putting] her in a hijab covering her hair and ears.’

The purpose of this digital assault is to assert dominance and to use shame to curtail and control the access, visibility and comfort of women and queer folk online, for the same reason that rape, harassment and sexual assault function to erase women and queer folk from physical spaces. The power of the transgressor in this situation derives from the shame being borne by the person attacked. Violence against women relies greatly on their powerlessness and on the feeling that this violation is not only inevitable but a comeuppance for the woman who dared to venture out into a public space, whether digital or physical, and made her presence known.

The rapid democratization of sophisticated AI tools has placed the capability to fabricate hyper-realistic nude or sexually explicit images of anyone into the hands of perpetrators worldwide. This content, often made with photo editing and generative AI technology, falsely depicts persons nude and in sexually explicit situations with one primary goal: to induce paralyzing shame, compel silence, and enforce compliance.

Despite this, shame is not a negative emotion. Shame exists for an important evolutionary reason; otherwise why would we, some of the most gleefully selfish mammals, even allow it to exist in our species? Shame actually requires a lot of intelligence to wield and to feel. Shame, when directed at the abuse of power or towards the violator of a person’s rights, ceases to be a repressive tool for control and instead becomes a means of resistance and reclamation of power. But in order for it to be the latter, it ideally needs to be a communal and unwavering stance.

Unfortunately, in today's diabolical times (i.e. the last two millennia), shame has switched directions, from being a burden on the aggressor to being marked onto the oppressed. Used as a tool for colonisation, shame worked wonders for the settlers. As Zhaawano Giizhik, from the Anishinaabe tribe of Indigenous North Americans, put it: ‘they taught us to hate ourselves and, in the end, to hate "God" for making us "Indian."’ Shame that was once a tool for communal growth and understanding became, in the hands of the colonisers, a way to dehumanize, subjugate and deform entire races, cultures and continents. As Gemma Hamilton explains, shame in Aboriginal communities is linked to self-promotion or to receiving praise, starkly different from its meaning in the West, where shame is felt at being violated or treated unjustly. She goes on to say, ‘For example, shame may be felt when one is the center of attention… when meeting strangers, in the presence of close relatives, when passing near a forbidden place, or when exposed to secret ceremony information. Most commonly, shame in Aboriginal culture includes a fear of negative consequences arising from a perceived wrongdoing, a fear of disapproval and a strong desire to escape the unpleasant situation (Harkins, 1990).’

It's a popular, albeit incorrect, trope, especially in reformative justice circles, that shame is counterproductive to growth and reform – a misconception ironically propagated by cis or trans women. Shame has been used to repress, control and condition women for thousands of years, and very effectively too. Sure, one could say that it hasn't led to ‘growth’ in the sense that is afforded to men, but it has shaped entire societies and cultures into believing that shame-based behavior is not only desirable but also natural, decreed by God even.

If you research online whether shame can be a catalyst for reform, you will get hundreds of white people telling you that it backfires. However, just as we are sold the concept of non-violence being the morally superior method of resistance, shaming those in power is made out to be ineffective, something they are immune to. We are told to strive to be ‘the bigger person’ while the actual bigger people, who wield the narrative, power and ammunition, crush you under their tanks and taxes. Yet when coupled with power, shame controls half of the world quite efficiently. It dictates what women and queer folk wear, not just outside our homes but inside. Shame tells us what we should weigh, what form our bodies should take, what style our hair should be, our skin colour, our profession, our marital status, our number of children. So why is it that we cannot take this very useful tool and emotion and direct it towards the harm that is aimed at us?

‘AI Undressing’ or ‘AI Nudification’ is one horror among the millions that have come with the artificial intelligence industry. Women and queer digital activists warned of this avalanche of non-consensual invasions of privacy back in 2023, but the argument that has prevailed is the one that says AI advancement cannot be stopped and, if the technology progresses at the expense of the already marginalised, so be it – and so it is. If you research AI undressing or nudification, there are hundreds of links to applications and websites that can do it for free, causing real-life harm that doesn’t stay online. According to a UN Women survey, 41 per cent of women in public life who experienced digital violence also reported facing offline attacks or harassment linked to it.

The act of circulating deepfakes is an attempt to impose a scarlet letter, forcing victims to internalize the aggressor’s malice. If the victim accepts the shame, they retreat, their voice is silenced, and the aggressor wins. This is why the counter-movement must focus on externalizing the shame, turning the burden back onto the creators and distributors of the violatory content, and reclaiming the narrative that the only party deserving of shame is the one perpetrating the harm.

Earlier in January, when Grok was still programmed to churn out NCIIs (non-consensual intimate images) on demand, Ashley St Clair, who happens to be Elon Musk's ex and the mother of one of his children, was attacked. ‘These images that Grok produced of me were so horrific. So traumatic to look at, hyper realistic and there were still real things from my life in the background. Like my older son's backpack...and it was a lot. It wasn't just the images of me that were disturbing, I also saw images of girls that looked about four years old.’ St Clair filed a product liability lawsuit arguing that Grok is an unsafe product released onto the public. She took a very public stand, refusing to be shamed, refusing to be intimidated and refusing to remain powerless. Now, however, she is being sued for violating the terms of service for X’s AI. She also disclosed in the linked video that she is being sued in Texas, where the judge presiding over the case owns stock in Tesla, another Elon Musk company.

In Pakistan, the rise of deepfakes and AI undressing is even more worrisome because, even without this looming threat, Pakistani women operate with constant paranoia, vigilance and self-censorship in order to ‘protect their reputations’ and sustain a level of agency and independence. Habiba Malik writes that ‘as per a report of the Digital Rights Foundation (DRF) in 2024, globally, an estimated 90% of deepfakes target women, while 70% of Pakistani women feel unsafe online. These figures paint a stark picture; synthetic media is not a technological novelty but a gendered weapon. These anxieties stem from a new kind of fear: the fear of being remade, in any way, in any form, through the lens of a machine gaze.’

Pakistani women already face innumerable obstacles to accessing public spaces, whether in real life or online. Komal Saquib*, a working class woman from Karachi, spoke about her neighbour, who was being blackmailed over an AI-generated deepfake video of her. She described the situation as harrowing for her neighbour, who was ready to pay the blackmailer in order to protect herself from gossip and maligning. The neighbour was worried that her husband would find out, would not believe that the videos were deepfakes and would murder her. Komal baji counselled the woman, telling her: ‘Tell him to send the videos. Give him my number, give him your husband's number, your mother-in-law's number, and tell him to send the videos. I will stand with you.’ Though this step may look risky, it shifted the balance of power and shame between the blackmailer and the blackmailed. Armed with this solidarity, the woman did what Komal baji had asked and scared the blackmailer off.

A more public case is that of journalist Gharidah Farooq, an established member of the Pakistani press and winner of the Tamgha-e-Imtiaz. Two weeks ago, she was one of the few journalists selected to cover the Islamabad Talks, where Iran and America were negotiating the terms of agreement during their ceasefire. However, instead of her work or her insights, the topic all over social media was her outfit. What began as harassment and slut-shaming soon turned into malevolence, with thousands of posts attacking the TV anchorperson, including AI deepfakes posted online. On the 24th of April, Farooq announced that one such perpetrator had been arrested for circulating these deepfakes. She thanked the National Cyber Crime Investigation Agency (NCCIA) for tracking down and arresting the person who made the video. Farooq also said that this was not the first time she had been the focus of a targeted attack, only this time there were laws in place to help her hold her attackers accountable. She added: ‘This was not just an attack on one woman; it was an assault and a method to terrorize all women. The excellent action being taken by the NCCIA will not only give women a sense of security in this society but also increase trust in the strict enforcement of the law and the strength of institutions.’

Section 21 of the Prevention of Electronic Crimes Act (PECA) deals directly with blackmail carried out by producing, distributing, or transmitting, through an information system, any photograph or video that is sexually explicit, doctored, or manipulated to harm reputation or for blackmail. Punishment can include imprisonment of up to five years, a fine of up to Rs. 5 million, or both. PECA Section 24 provides that using a deepfake to harass or stalk someone (including repeatedly trying to contact them) is punishable by up to three years in jail. However, it matters little whether laws are in place to supposedly protect the vulnerable. The problem is that we are a society of victim blamers and misogynists who relish the humiliation of marginalised demographics. Whether it is women, queer folk, religious minorities, ethnic minorities or animals, the dominant demographic is all too ready to place the shame and blame of our oppression back onto us.

The problem is not just individuals utilizing AI; it is the structure of the platforms and the failure to regulate the technology itself. Platforms like X enable environments that encourage and supply NCIIs (non-consensual intimate images) whilst profiting from them. The fight of nation states against these tech giants underscores a growing international recognition that these corporations must be held accountable for the harm their services enable. This leads to critical questions about corporate ethics and regulatory oversight: why are people not being held accountable for creating these AI apps that can and do ‘nudify’ unsuspecting and often underage victims? The current legal and ethical landscape allows developers to prioritize profit and speed over fundamental human and digital rights. We must demand a "health & safety inspection" of these LLMs to determine their compliance with human and digital rights. This means not only auditing the outputs but inspecting the datasets and safety protocols to ensure that models are trained against, not for, sexual violence and exploitation.

True change requires shifting the cultural locus of shame. This means creating environments, in our friendships, our families, our schools, and our workplaces, that actively empower and enable members of all genders to respect, believe and protect one another. The ultimate goal is to foster robust community standards that rely not on AI filters or algorithmic takedowns, but on collective empathy and immediate support. When an act of digital abuse occurs, the response should not be to investigate the victim's behavior, but to immediately and fiercely direct shame where it belongs: towards the perpetrators.

We need to encourage more visibility and more support for survivors. Rejecting shame is not just a personal act of healing; it is a political declaration that reclaims dignity and paves the way for a safer digital future.

The expectation of shame is deeply rooted in historical purity culture and modern misogyny, acting as a social contract where women are held responsible for preventing their own abuse. When a non-consensual image surfaces, the questions directed at the victim are rarely about the perpetrator’s malice, but about the victim’s conduct: Why did you take that picture? Why did you trust that person? This immediate focus on the victim’s actions, rather than the perpetrator’s crime, is the mechanism through which shame is successfully weaponized. The appearance of an NCII is not only a violation but often triggers homophobia or transphobia, threatening to "out" individuals in unsafe environments or subjecting them to moral panic. The shame victims are expected to internalize is multilayered: shame over the violation, shame over the content itself, and shame over their identity, whether that be cis, queer or trans. The combined weight of these expectations leads to isolation, self-blame, and the desire for self-erasure, which is the intended result of the perpetrator’s attack.

The refusal to feel shame, therefore, is an act of profound self-reclamation. It is the recognition that the victim is host to a trauma inflicted by another, and not the source of a moral failing. When a person steps out of the shadows, refusing anonymity, they break the social contract of silence. They declare that the trauma belongs to the public domain as a crime, not to their private existence as a secret. This public defiance is a crucial step in transforming NCII from a source of personal degradation into an engine for systemic change.

Published by: Digital Rights Foundation in Digital 50.50, Feminist e-magazine
