May 14, 2026

Why must women build shadow justice systems to feel safe online?

Syeda Aima Tatheer

A woman matches with someone on a dating app. The conversation progresses quickly: he expresses serious intentions, talks about marriage, and creates a sense of emotional closeness. Weeks later, he disappears without explanation. In other cases, the same individual may be found interacting with multiple women simultaneously or hiding an existing marriage. These are not isolated incidents but recurring patterns reported by women in online spaces.

Digital platforms are often considered spaces of choice and freedom, particularly for women in restrictive social contexts. However, in Pakistan, these spaces increasingly reproduce the gendered inequalities found offline. Rather than reducing risk, digital matchmaking platforms have reorganised it, shifting responsibility for safety onto women while allowing harmful behaviour to operate with minimal accountability.

In a context where opportunities for interaction are limited, dating platforms such as Bumble and Muzz, along with social media groups like “Two rings official”, have become key sites for partner search. Yet for many women, these platforms function less as spaces of opportunity and more as environments of calculated vulnerability. In response, women in Pakistan are building their own justice systems, not in courts, but in WhatsApp groups and closed Facebook communities. These systems do not exist by design, but by necessity. They emerge in response to digital environments where safety is uncertain, accountability is weak, and harm is difficult to prove.

Methodology

This article adopts a qualitative research approach to examine women’s experiences with digital matchmaking platforms in Pakistan. Data was collected through analysis of user-generated content across multiple online spaces, including:

- User-generated content in closed Facebook groups, focused on matchmaking and safety.

- Posts and discussions on X (formerly Twitter).

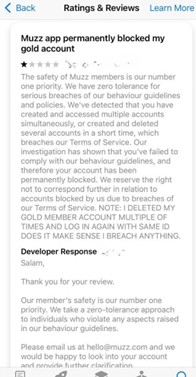

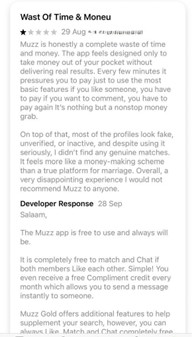

- User reviews of the Muzz application on app stores.

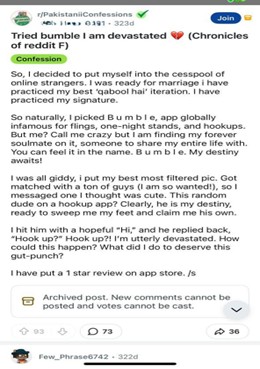

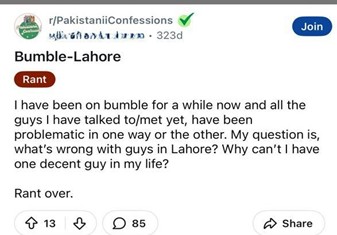

- Reddit threads on confessions, Muslim dating, and matchmaking experiences.

- Public discussions on platforms such as Bumble and Muzz.

To support this analysis, selected examples of user-generated content (including Reddit discussions, app store reviews, and anonymised interaction screenshots) are presented below to illustrate recurring patterns observed across platforms.

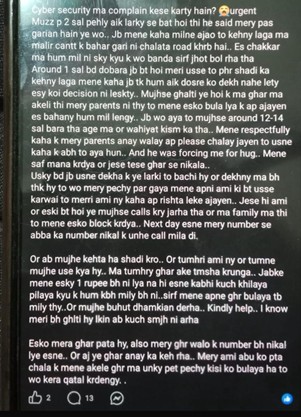

Screenshots of Reddit discussions reveal a consistent mismatch between users’ expectations of marriage and actual experiences on dating platforms.

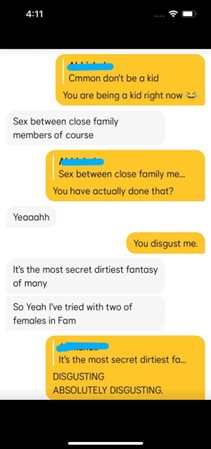

Anonymised interaction examples demonstrate inappropriate behaviour and safety risks encountered by users.

These platforms were selected because they host community-based discussions in which women share personal experiences, identified risks, and safety-related information.

The analysis focuses on pattern identification across multiple platforms to understand how repeated behaviours shape user experiences and perceptions of risk. These patterns were not treated as isolated incidents but as part of broader interactional structures within digital platforms. Due to the sensitivity of the topic and ethical considerations, all examples have been anonymised.

How gendered power operates in the digital space

The experiences of women on Muzz and Bumble platforms indicate that men and women do not operate with equal power. While both are present in the same digital space, their risks, responsibilities, and consequences are very different. Women often enter these platforms with the intention of finding a serious relationship or marriage. In contrast, many men misrepresent their intentions, hiding marital status, engaging in multiple parallel conversations, or using emotional manipulation to maintain control. Across Reddit discussions, Facebook group posts, and app-based conversations, women consistently describe practices such as love bombing followed by sudden withdrawal or ghosting. These patterns do not simply create emotional dependency; they structure interactions in ways where emotional investment can be extracted without accountability.

These patterns are not hypothetical; they are reflected in lived experiences shared by users. One woman describing her experience on a dating platform wrote:

“I met someone through this app and assumed we were moving towards marriage. I waited for four years for him to involve his family. Recently, he told me his parents were pursuing someone else. It has been emotionally devastating.”

While individual experiences may vary, what stands out is not just the outcome but the structure of interaction: rapid emotional investment, prolonged uncertainty, and eventual withdrawal or revelation. Such cases highlight how emotional commitment is encouraged without accountability, leaving women to bear the consequences.

At the same time, women are expected to manage interactions carefully. They assess risks, verify identities, and remain cautious even during uncomfortable conversations. This amounts to a continuous form of emotional labour, in which the work of anticipating and managing potential harm falls on women.

These patterns reflect an interactional structure where emotional engagement is encouraged but accountability is absent. The architecture of these platforms, characterised by quick matching, private chats, and easy exit, allows users to move between connections without reputational consequences. For men, this creates flexibility; for women, it creates uncertainty. Emotional investment becomes asymmetrical, where one party can withdraw without explanation while the other is left managing the consequences.

Taken together, these dynamics show that gendered power in digital matchmaking is not simply about behaviour but about how platforms enable certain actions to occur without consequence while placing the burden of safety on those most at risk.

Gaps in Platform Moderation and Reporting Mechanisms

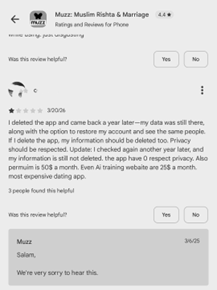

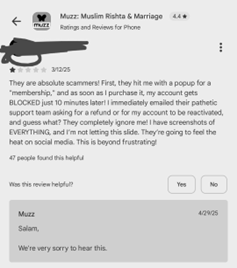

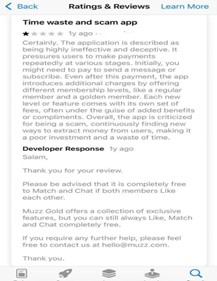

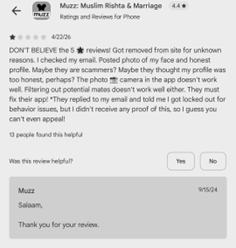

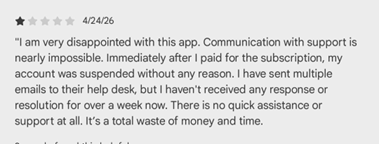

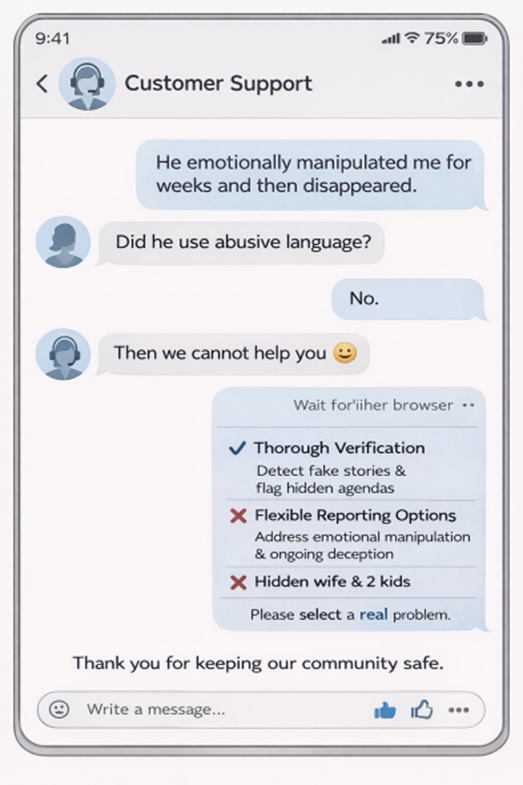

Dating apps like Muzz market themselves as “Halal” spaces for serious commitment, but the reality for women is a landscape of calculated vulnerability. These platforms present themselves as safe and regulated environments; however, their systems often fail to address the realities women users face. User reviews of the Muzz app on app stores highlight weak moderation, delayed responses, and generic AI-based replies that do not resolve actual issues (Trustpilot reviews; Reddit user discussions). Reporting mechanisms are limited and designed for hard violations such as harassment or spam. However, they do not account for the ‘grey area’ harms experienced by women, such as breadcrumbing, love bombing, emotional manipulation, deception, or coercion, which often fall outside predefined categories. As a result, no meaningful action is taken.

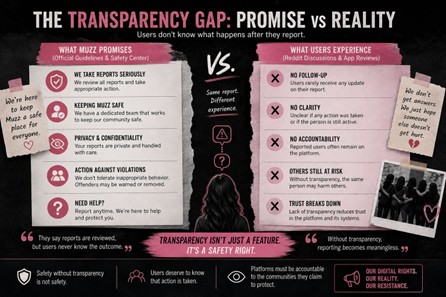

There is also a lack of transparency in how users' reports are handled. According to Muzz’s official safety guidelines, reports are reviewed by a dedicated moderation team and appropriate action is taken based on evidence. However, the platform does not clearly state whether reporters will be informed of the outcome, nor does it specify any obligation to provide feedback or status updates. Analysis of Reddit discussions and user reviews of the Muzz app indicates that users rarely know what happens after they report someone: whether any action was taken, whether the person is still active, or whether others remain at risk. This opacity weakens trust and reduces the deterrent effect of reporting systems.

In the screenshot above, a user complains that the platform permanently blocked their Gold account. The platform also explicitly refuses further communication, which directly contradicts the user's expectation of a fair process.

Verification systems are equally weak. User reviews of the Muzz and Bumble apps and discussions on Reddit highlight concerns about fake profiles, hidden marriages, and false claims about jobs or intentions. In some cases, women only discover the truth after investing months in conversations. This makes deception a low-risk, high-reward strategy within these platforms. These limitations reveal that platforms are designed to manage risk for themselves rather than for users.

By narrowing what counts as actionable harm, they reduce their responsibility while leaving users exposed to forms of harm that are harder to define but equally damaging.

More importantly, platforms treat harm as isolated incidents, while women experience safety as continuous and pattern-based. Instead of preventing harm, platforms shift the burden onto users to identify, interpret, and report it. When harm is not easily categorised, it effectively becomes invisible within the system. Beyond individual interactions, these patterns are reinforced through normalisation. Across multiple Reddit threads, Facebook group discussions, and app-based conversations, women describe behaviours such as ghosting, manipulation, or maintaining multiple relationships as not only common but often socially accepted among men. In some cases, such behaviour is even framed as experience or smartness, rather than misconduct.

This contrast between platform promises and user experiences can be summarised as follows:

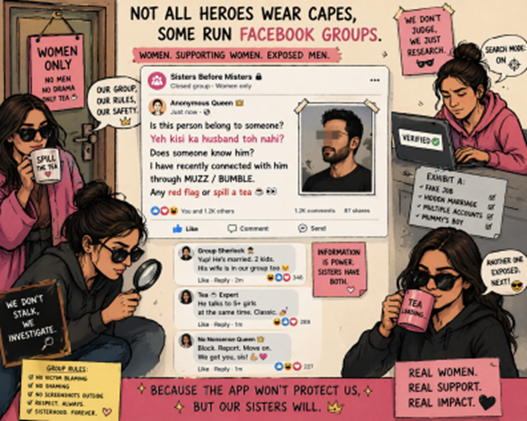

This normalisation reduces accountability at a collective level. When harmful behaviour is expected or trivialised, it becomes harder to challenge and easier to repeat. As a result, women are not only navigating individual risks but also a broader environment where those risks are normalised and reproduced.

In response to these structural gaps, women are creating their own systems of safety and accountability. Closed groups on Facebook and WhatsApp have become spaces where women share experiences, verify identities, and warn others. A common practice is posting a man’s profile and asking: “Does anyone know him? Is he already married? Any red flags?” These questions reflect the absence of reliable verification mechanisms within platforms themselves.

Through these networks, women identify patterns of behaviour such as repeated ghosting, emotional manipulation, or deceptive intentions. What may appear as an isolated interaction within a dating app becomes a recognisable pattern when shared across multiple users.

In many cases, a single post generates multiple responses within hours, with other women sharing similar experiences or additional information. This process turns fragmented individual experiences into collective pattern recognition. Unlike platform reporting systems, which isolate complaints, these networks aggregate them, making it easier to identify repeat behaviour.

Analysis of posts and interactions in closed Facebook groups suggests that these spaces operate through informal rules, norms, and trust mechanisms. For instance, some groups require verification before allowing posts, while others encourage evidence-based sharing through screenshots. These practices indicate that these networks function not as disorderly spaces, but as evolving systems with their own internal logic of accountability.

More importantly, these networks redistribute power. Instead of relying on institutional validation, women produce their own systems of credibility based on shared experience and collective memory. They move from being isolated users to active participants in a collective system of knowledge production. At the same time, these networks provide emotional and psychological support. Women share experiences of confusion, anxiety, and emotional trauma, and receive validation and advice from others. In many cases, these spaces become safer than the platforms themselves.

These informal networks, therefore, function as parallel governance systems, which can be understood as “shadow justice systems”[1], emerging not out of preference, but necessity.

Despite their effectiveness, these systems operate within complex ethical and legal boundaries. The sharing of names, profiles, and screenshots can lead to reputational harm, especially if the information is incomplete or inaccurate. There is no formal mechanism to verify claims or provide the accused with an opportunity to respond. This raises concerns about fairness, misuse, and the absence of due process.

From a legal perspective, these practices exist in a grey zone shaped by the Prevention of Electronic Crimes Act (PECA) 2016 and related cybercrime provisions. While the law includes clauses on defamation, unauthorised use of information, and online harassment, it is not designed to address the relational and pattern-based harms women experience on digital platforms.

One key gap is that legal frameworks tend to recognise harm only when it is explicit, individual, and provable. Subtle but damaging patterns, such as emotional manipulation, deception, or sustained psychological harm, often fall outside legal recognition. This creates a disconnect between lived experiences and legal categories.

Another limitation is procedural. Reporting cybercrime requires formal complaints, evidence submission, and engagement with institutions that many women do not fully trust or feel comfortable approaching. This discourages reporting and reinforces underreporting.

Another critical gap lies in the limited awareness of legal rights and reporting mechanisms among women. Studies indicate that a significant proportion of women in Pakistan are unaware of existing cybercrime laws and the procedures required to report online harassment. This lack of awareness is not only informational but structural; many women do not know where to file complaints, how to document evidence, or how to navigate legal institutions.

Even when awareness exists, reporting is often discouraged by social stigma, fear of reputation damage, and lack of trust in law enforcement. In many cases, women initiate complaints but withdraw them midway due to pressure or procedural challenges. These barriers highlight that access to justice is not only about the existence of laws, but about the ability to use them effectively.

As a result, informal networks do not simply function as alternative spaces of support; they become primary sources of legal guidance, verification, and risk management in contexts where formal systems remain inaccessible or unresponsive.

At the same time, women who participate in informal networks may themselves be exposed to legal risk. Sharing someone’s identity or allegations publicly can potentially fall under defamation or privacy violations, even when done for protective purposes. This creates a structural dilemma: systems that fail to protect are replaced by systems that cannot be fully legitimised. Women are forced to navigate between institutional failure and legal vulnerability. These practices, therefore, should not be seen simply as misuse of digital space, but as necessary responses to gaps in accountability. Women are not choosing these systems freely; they are adapting to the absence of reliable alternatives.

Recommendations

These findings indicate that matchmaking platforms need to move beyond engagement and treat user safety as a core structural priority. Dating platforms like Bumble and Muzz should adopt more proactive safety protocols, including stronger identity verification and pattern-based detection of repeated harmful behaviours. Behaviours like emotional manipulation, misrepresentation and deceptive relationship practices may be difficult to quantify, but they become visible through repeated patterns. Reporting systems must also include a category for grey-area harms, and platforms should allow users to report patterns of behaviour over time rather than only isolated incidents. Aggregating complaints across multiple users would help identify repeat offenders. To strengthen trust and improve accountability, platforms should inform users about the status of the reports they submit; even simple feedback such as ‘action taken’ or ‘account under review’ helps. To address context-specific harm, platforms must invest in trained moderation teams rather than relying on automatically generated responses.

Similarly, to address the gap between law and lived experience, legal definitions need to be expanded to include emotional manipulation, deception and coercive digital behaviours, which currently fall outside formal recognition. Reporting mechanisms must also be more accessible and survivor-centred, with simplified complaint processes and user-friendly guidance on evidence collection. The analysis of these social networks also showed that women have limited awareness and understanding of their rights and protections under cybercrime laws. There is therefore an urgent need to raise awareness of cybercrime laws and reporting mechanisms. Effective reporting also requires gender-sensitive training, responsive handling of complaints, and confidentiality.

Informal networks help women share information, spot harmful patterns, and warn others when formal protections fail. Platforms can learn from these spaces by creating safer, anonymous ways to report concerns. At the same time, clear guidelines on responsible sharing are needed to reduce risks like misinformation or defamation while keeping these networks useful.

This redistribution of responsibility reflects broader structural inequalities rather than individual failure. The emergence of these informal systems is not a sign of dysfunction among users, but a signal of institutional failure. When platforms do not provide safety, users redesign safety for themselves. Until such changes occur, women will continue to rely on each other, not because these systems are ideal, but because they are necessary. What is often dismissed as informal or risky is, in reality, a carefully constructed response to institutional absence. The real question, then, is not why these shadow systems exist, but what their existence reveals about the failure of the systems they replace.

[1] A shadow justice system refers to informal, user-generated accountability networks that emerge in digital spaces when formal legal and platform governance mechanisms fail to provide timely or effective protection (Trottier, 2016; Loveluck, 2019).

Published by: Digital Rights Foundation in Digital 50.50, Feminist e-magazine
